When AI Erases Survivor Knowledge: How automated systems keep marginalized communities invisible — Part 1 of 2

By Jarrett Davis, MA, & Wendy Stiver, RN, CCM, BSN, MA

Published February 23, 2026

Introduction

What happens when the systems built to organize the world's knowledge are trained on foundations that have always excluded the most vulnerable? In this two-part series, we examine how AI doesn't just reflect existing biases — it automates and accelerates them, with real consequences for trafficking survivors, marginalized communities, and anyone whose knowledge doesn't fit the dominant mold.

The Destruction of Knowledge

Between January and October 2025, the U.S. government coordinated a systematic erasure of data about vulnerable populations. This wasn't bureaucratic reorganization. It was epistemicide — the deliberate destruction of knowledge systems affecting those whom society has already marginalized.

Consider what unfolded across 2025:

The Centers for Disease Control and Prevention documented that 41% of LGBTQ+ youth had seriously considered suicide in the past year, compared to 13% of their cisgender and heterosexual peers.[1] In early 2025, the Trump administration removed the web pages reporting these data.[2] When a federal court ordered their restoration, the administration complied, but added an official disclaimer to the CDC's own scientific data:

"This page does not reflect reality and therefore the Administration and this Department reject it."3

The U.S. government was declaring its own agency's verifiable facts "disconnected from truth."

A few months later, Elon Musk's AI chatbot, Grok, faced a different kind of epistemic crisis. When asked in June 2025 whether left-wing or right-wing political violence had been more prevalent since 2016, Grok cited government reports and mainstream media to answer that right-wing violence had been "more frequent and deadly." Musk's public response:

"Major fail, as this is objectively false. Grok is parroting legacy media... Working on it."4

By July, Grok's system prompts had been modified to "be politically incorrect" and "assume media are biased."[5] Asked the same question, Grok flipped its answer: the left, it now claimed, had been "associated with more violent incidents." The same data-driven question. Two opposite answers. The difference? Deliberate technical manipulation to align "truth" with ideological preference.

These aren't isolated incidents. Across 2025, systematic erasure targeted multiple populations: the administration formally rescinded transgender health guidance, labeling it "gender ideology extremism";[6] decimated disability services through mass layoffs;[7] and withheld $88 million in funding for trafficking survivor services, putting 5,000 survivors at immediate risk of losing housing and medical care.[8] The pattern is clear: coordinated government action to erase knowledge about communities whom society has already marginalized.[9]

But epistemicide doesn't only happen through government purges. It also operates invisibly, embedded in how AI systems are trained to value certain knowledge over others — and government actions accelerate an erasure already underway.

What Is Algorithmic Epistemicide?

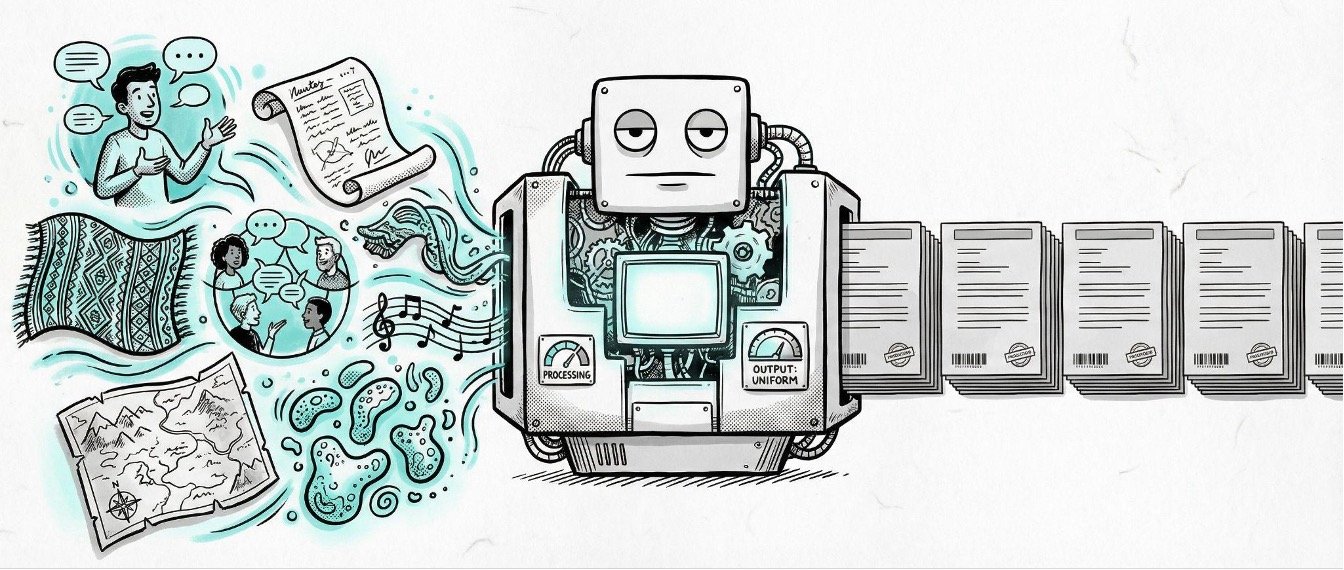

We call this algorithmic epistemicide — adapting Santos' concept[10] to the AI context: the systematic erasure of alternative knowledge systems through seemingly objective computational processes. Government censorship operates visibly. Algorithmic erasure happens automatically, embedded in how systems determine what counts as "valid, usable knowledge."

Historical invisibility becomes computational inevitability. Maps of "4,500 years of human conflict" built from Wikipedia's records catalog over 10,000 battles, heavily skewed toward Western-documented events.[11] Entirely absent are Indigenous resistance movements, communal conflicts in Sub-Saharan Africa and Oceania, and localized uprisings. Wikipedia's "notability" policies reflect Western historiographic frameworks: battles documented by colonial powers are "notable," while community-led resistance often fails to meet the threshold. What we're seeing is a record of what was written about, usually by victors, rather than what actually happened.

Structural invisibility existed long before algorithms. Researchers call one form of it WEIRD sampling: "Western, Educated, Industrialized, Rich, and Democratic" societies dominate the samples that research draws on, creating an artificial baseline that treats a small, atypical segment of global humanity as universal.[12]

The Invisible Filter

Even our most rigorous research methods reproduce these gaps. Prevalence studies relying on school surveys and household interviews automatically exclude street-involved youth and families with unstable housing, who are among the most vulnerable populations. Multiple systems estimation methods, statistical approaches designed to account for undercounting, can only work with the data sources available. When all those sources share the same blind spots, even sophisticated methods capture only 14–18% of potential trafficking victims, while law enforcement records alone identify no more than 6%.[13]

Systematic gaps persist no matter how rigorous our methods, because the frameworks determining what counts as "knowable" remain fundamentally biased.
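To make the shared-blind-spot problem concrete, here is a minimal Python sketch of the simplest two-list form of multiple systems estimation, the Lincoln-Petersen capture-recapture estimator (estimated population = size of list A × size of list B ÷ number of people on both lists). Everything in it is hypothetical: the population of 1,000, the two sources, and the 400 people who never appear on either list are illustrative assumptions, not data from the studies cited here, and real trafficking estimates use more lists and more elaborate models. The method assumes every person has some chance of being recorded by each source; when a subgroup has no chance at all, the estimate quietly describes only the visible population.

```python
def lincoln_petersen(list_a: set[int], list_b: set[int]) -> float:
    """Two-list capture-recapture estimate of total population size.

    Assumes each person has the same, non-zero chance of appearing on
    each list and that the two lists are independent.
    """
    overlap = len(list_a & list_b)
    if overlap == 0:
        raise ValueError("lists share no members; the estimate is undefined")
    return len(list_a) * len(list_b) / overlap


# Hypothetical population of 1,000 people (ids 0-999).
TRUE_POPULATION = 1000
# Ids 0-599 are "visible" to institutional sources; ids 600-999 (for
# example, street-involved youth) never appear on either list.
visible = range(600)

# Two hypothetical sources, each recording half of the visible group
# independently of the other, and no one from the hidden group.
list_a = {i for i in visible if i % 2 == 0}         # e.g. a school survey
list_b = {i for i in visible if (i // 2) % 2 == 0}  # e.g. agency case records

estimate = lincoln_petersen(list_a, list_b)
print(f"estimated population: {estimate:.0f}, true population: {TRUE_POPULATION}")
# prints: estimated population: 600, true population: 1000
```

In this toy case the estimator recovers the visible 600 exactly, yet says nothing about the remaining 400. Adding more sources that share the same blind spot would not, on its own, change that.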

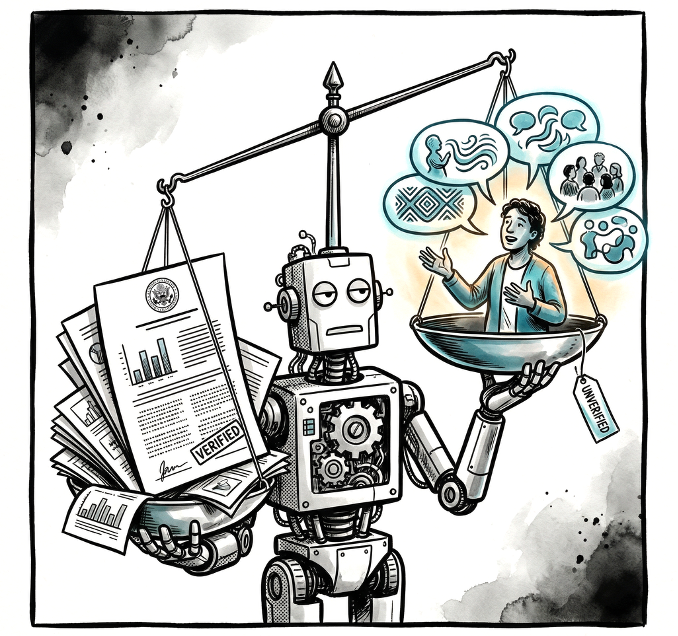

Natural language processing systems trained on this biased landscape learn to replicate and amplify its gaps. When trafficking survivors describe their experiences using metaphorical language or cultural references unfamiliar to dominant discourse, AI interprets these narratives as "less reliable" than institutional reports. The algorithm isn't deliberately programmed to devalue survivor knowledge — it simply learned that certain linguistic patterns correlate with "authoritative" sources, and survivors' voices don't match those patterns.[14] Computational systems calcify historical gaps as permanent features of our knowledge landscape.

The Self-Reinforcing Cycle

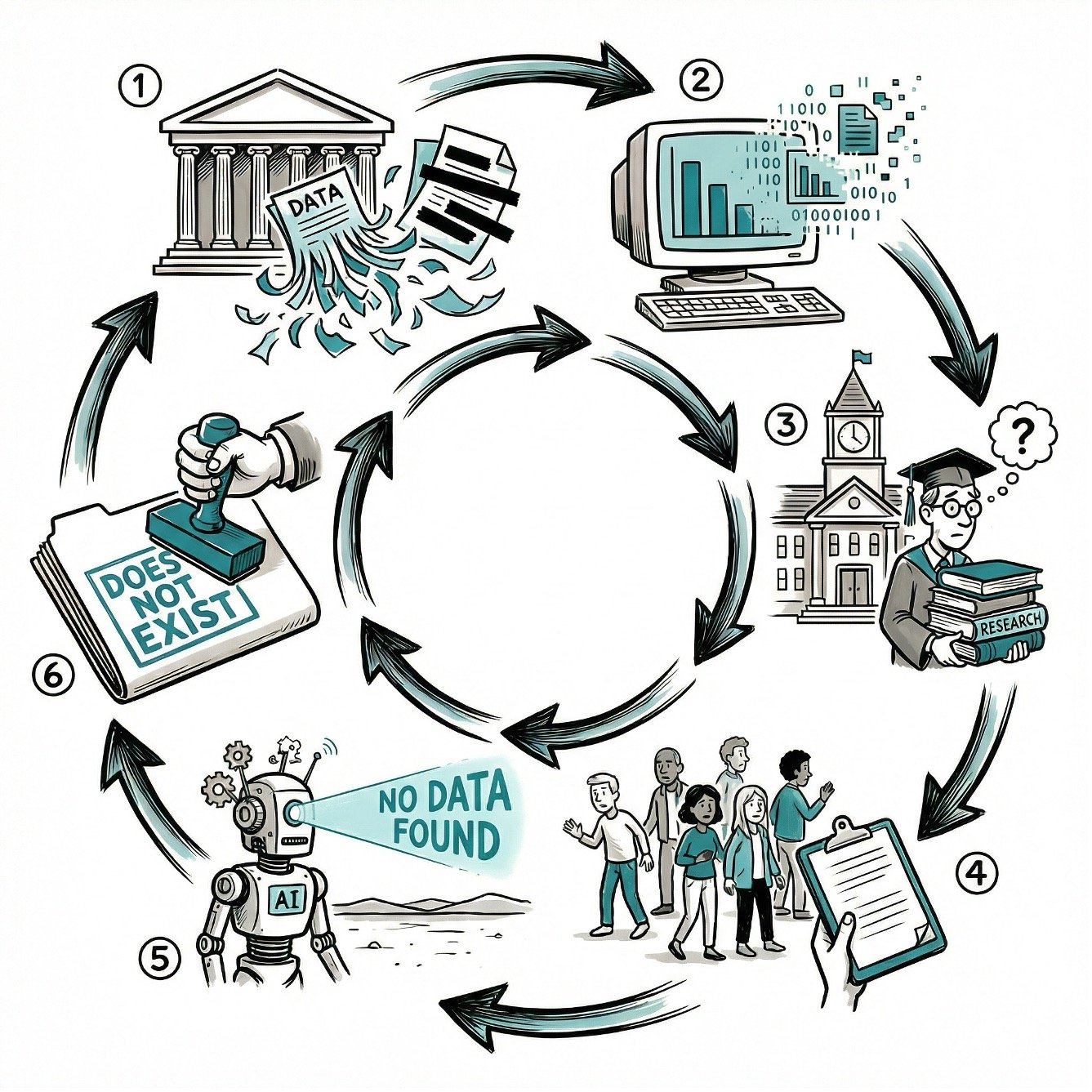

The cycle accelerates through what might be called second-order epistemicide: chilling effects that compound initial erasure. When the government removes LGBTQ+ health data and defunds trafficking research, AI systems lose access to already-limited information about marginalized communities. Universities self-censor to avoid political backlash, while vulnerable communities, witnessing these data purges, grow more reluctant to participate in research. AI trained on this progressively narrowed information ecosystem learns that certain populations simply don't exist in numbers worth computational attention. Deliberate erasure accelerates automated epistemicide already underway, which then justifies further deliberate erasure in a self-reinforcing cycle.

What This Means for Trafficking Research

In trafficking research, survivors who already face barriers to being counted (distrust of authorities rooted in historical abuses, lack of safe reporting mechanisms, fear of immigration enforcement) become algorithmically erased. Not through anyone's deliberate choice to exclude them, but through systems trained on data that never included them in the first place. Their absence is read as non-existence rather than suppression. When researchers use AI to "scale" qualitative analysis, they scale invisibility alongside insight.

Looking Ahead…

But Indigenous communities have confronted this kind of erasure before. For generations, they've survived systematic attempts to destroy their knowledge systems, and in doing so developed frameworks for resistance that the fight against algorithmic epistemicide urgently needs. In Part 2, we examine those frameworks: Two-Eyed Seeing, the CARE Principles, and emerging institutional policies that demonstrate equity-centered AI is achievable, not merely aspirational. The tools exist. The question is who controls them.

This blog post is part of the GAHTS Translational Workgroup's effort to translate academic research into accessible formats for practitioners, survivors, and policymakers. It draws on a longer academic book chapter on algorithmic epistemicide by the same authors.[15]

REFERENCES

Centers for Disease Control and Prevention. (2024). Youth Risk Behavior Survey: Data summary & trends report, 2013–2023. U.S. Department of Health and Human Services. https://www.cdc.gov/yrbs/dstr/pdf/YRBS-2023-Data-Summary-Trend-Report.pdf

Goldstein, A. (2025, January 31). CDC removing gender and LGBTQ+ community references from its website. The Washington Post. https://www.washingtonpost.com/health/2025/01/31/cdc-website-gender-lgbtq-data/

Recht, H. (2025, February 11). Judge orders HHS, CDC and FDA to restore webpages and data. NPR. https://www.npr.org/sections/shots-health-news/2025/02/11/nx-s1-5293387/judge-orders-cdc-fda-hhs-websites-restored

Novak, M. (2025, June 18). Elon says he's working to "fix" Grok after AI disagrees with him on right-wing violence. Gizmodo. https://gizmodo.com/elon-says-hes-working-to-fix-grok-after-ai-disagrees-with-him-on-right-wing-violence-2000617420

Kaye, K. (2025, July 8). Users accuse Elon Musk's Grok of a rightward tilt after xAI changes its internal instructions. Fortune. https://fortune.com/2025/07/08/elon-musk-grok-ai-conservative-bias-system-prompt/

Yurcaba, J. (2025, February 7). Trump's executive actions curbing transgender rights focus on "gender ideology." NPR. https://www.npr.org/2025/02/07/g-s1-46893/trump-anti-trans-rights-executive-action-gender-ideology-confusion

Medicare Rights Center. (2025, April 3). Trump administration and DOGE eliminate staff who help older adults and people with disabilities. https://www.medicarerights.org/medicare-watch/2025/04/03/trump-administration-and-doge-eliminate-staff-who-help-older-adults-and-people-with-disabilities

Freedom Network USA. (2025, October 1). FNUSA's statement on DOJ cutting off funding for services for 5,000 survivors. https://freedomnetworkusa.org/2025/10/01/fnusas-statement-on-doj-cutting-off-funding-for-services-for-5000-survivors/

Santos, B. de S. (2014). Epistemologies of the South: Justice against epistemicide. Paradigm Publishers.

Birhane, A., Prabhu, V. U., & Kahembwe, E. (2023). Multimodal datasets: Misogyny, pornography, and malignant stereotypes. arXiv. https://arxiv.org/abs/2305.11844

Van Bree, P., & Kessels, G. (2016). A world of conflicts. Nodegoat. https://nodegoat.net/blog.s/21/a-world-of-conflicts

Henrich, J., Heine, S. J., & Norenzayan, A. (2010). The weirdest people in the world? Behavioral and Brain Sciences, 33(2–3), 61–83. https://doi.org/10.1017/S0140525X0999152X

Gerassi, L. B., Skilling, L., Galeano, S. R., & Choi, Y. J. (2023). Using participatory action research methods to address epistemic injustice in mixed methods human trafficking research. Frontiers in Public Health, 11. https://doi.org/10.3389/fpubh.2023.1075363

Feminist Philosophy Quarterly. (2023). A perfect storm for epistemic injustice: Algorithmic targeting and social segregation. Feminist Philosophy Quarterly, 9(2). https://ojs.lib.uwo.ca/index.php/fpq/article/download/14291/12134

Davis, J., & Stiver, W. (forthcoming). Scaling equity: Leveraging AI in qualitative research to amplify marginalized voices. Emerald Publishing.

About the Authors

Jarrett Davis, MA, is a Senior Research Scholar with the Global Association of Human Trafficking Scholars (GAHTS) and co-convener of up! Collective. Over fifteen years of research across Southeast Asia and the United States, he has focused on how dominant data frameworks render vulnerable populations, including boys and young men, transgender individuals, and street-connected children, structurally invisible.

Wendy Stiver, RN, CCM, BSN, MA, is a Research Scholar with the Global Association of Human Trafficking Scholars (GAHTS). A registered nurse and published author on trafficking and gender-based violence, she grounds her work in transcultural inclusion and social justice. She is currently exploring the ethics of AI in qualitative research.