"Resisting Algorithmic Epistemicide": How Indigenous frameworks and community governance can fight back — Part 2 of 2

By Jarrett Davis, MA, & Wendy Stiver, RN, CCM, BSN, MA

Published March 30, 2026

This is Part 2 of our series on algorithmic epistemicide. In Part 1, we examined how AI systems systematically erase marginalized knowledge, not through deliberate exclusion but through training on data that never included certain communities in the first place. Government data purges in 2025 accelerated this erasure, but the problem runs deeper: computational systems calcify historical invisibility into permanent features of our knowledge landscape. Survivors’ voices don’t match the linguistic patterns AI learned to trust, so their absence is read as non-existence rather than suppression. But erasure isn’t the end of the story.

Introduction

In Part 1, we mapped the problem: how algorithmic systems transform historical invisibility into computational inevitability, creating a self-reinforcing cycle where erasure justifies further erasure. But Indigenous communities have confronted this kind of erasure before. For generations, they’ve survived systematic attempts to destroy their knowledge systems—and developed frameworks for resistance that the fight against algorithmic epistemicide desperately needs.

This blog post examines those frameworks—Two-Eyed Seeing, the CARE Principles, and emerging institutional policies—and asks what they demand of researchers, practitioners, and institutions working in trafficking and exploitation. The tools for equity-centered AI exist. The question is who controls them, and whether institutions will implement them structurally or treat them as aspirational add-ons.

Indigenous Frameworks as Resistance


Resistance is innately human, not exceptional. As Jewish humor has it: “They tried to kill us. We won. Let’s eat.” Indigenous populations worldwide demonstrate the same resilience, the same survival. African American communities during slavery faced systematic attempts to eradicate their epistemologies, so they embedded knowledge in music, storytelling, and folk songs, communicating vital information to those who knew how to decode it. Feminist scholars, through work like Women’s Ways of Knowing (Belenky et al., 1986) [1], challenged male-centric epistemological frameworks by centering personal experience, relationship, and narrative as valid forms of knowledge. These resistance patterns have continued across centuries in different forms, because the impulse to preserve knowledge and survive collectively runs deeper than any attempt to suppress it.

When Indigenous communities confronted the crisis of Missing and Murdered Indigenous Relatives (MMIR), with thousands of cases ignored or mishandled by authorities, they didn’t just critique federal data failures and systematic erasure. They built alternatives grounded in their own epistemologies, creating frameworks that offer concrete tools for researchers working against epistemicide.

Two-Eyed Seeing

Two-Eyed Seeing (Etuaptmumk), developed by Mi’kmaw Elder Albert Marshall [2], provides an organizing principle:

“Learning to see from one eye with the strengths of Indigenous knowledges and ways of knowing, and from the other eye with the strengths of Western knowledges and ways of knowing, and to using both these eyes together.”

The framework establishes co-equal holding rather than an integration that subsumes Indigenous knowledge into Western frameworks: the same reality, different interpretive tools, both valid. For trafficking research, this means survivor knowledge systems and conventional risk assessments inform each other without hierarchy. Community oral histories stand alongside institutional databases, relational understanding alongside statistical patterns, and trust is valued equally with quantified metrics.

Two ways of seeing: Indigenous knowledge and Western analysis produce something richer together than either can alone.

The CARE Principles

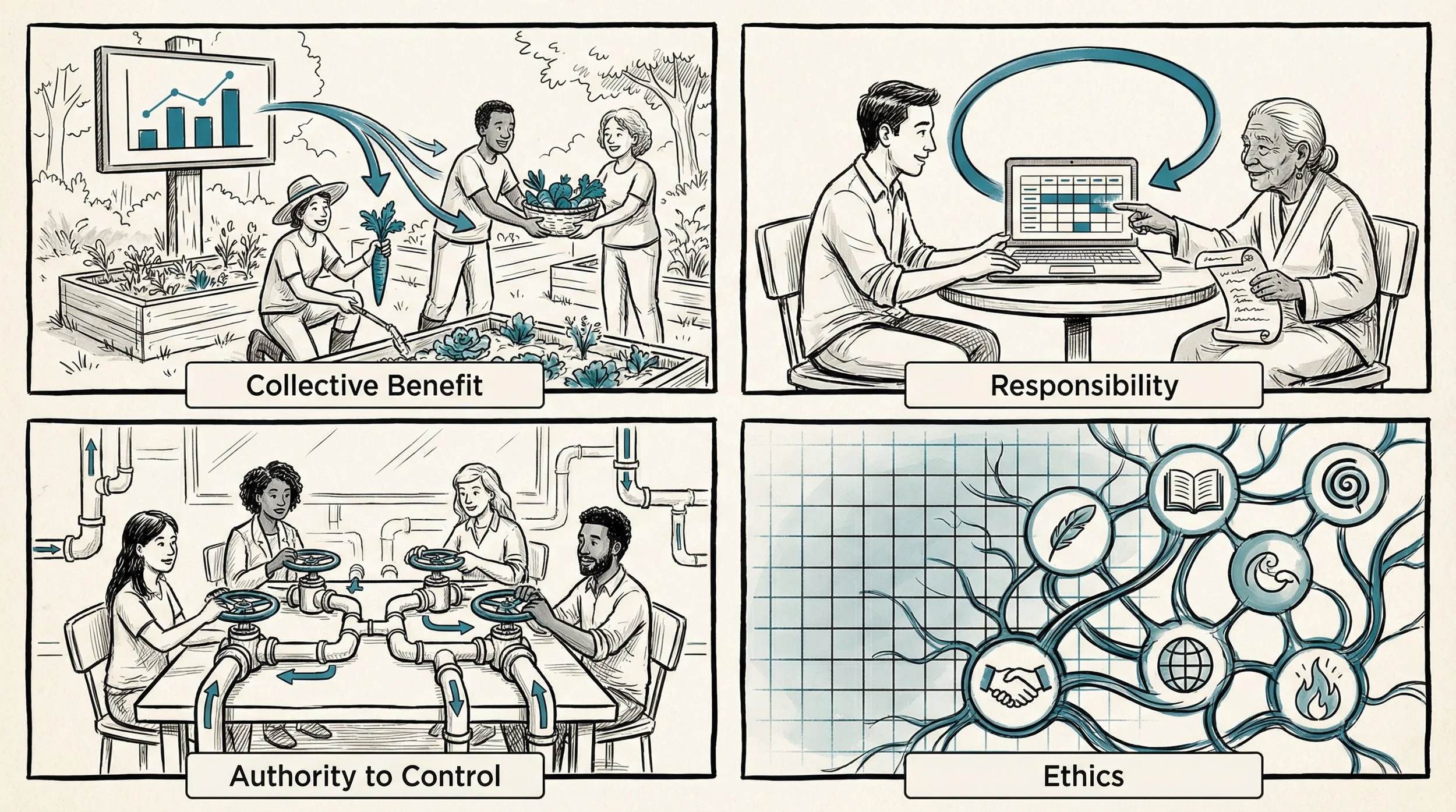

The CARE Principles, developed by the Global Indigenous Data Alliance in 2019 [3], translate this philosophy into data governance:

Collective Benefit moves beyond “do no harm” to actively designing research for community wellbeing. AI systems trained to detect strengths and resilience alongside vulnerabilities. Pattern recognition for protective factors, not just deficits.

Authority to Control repositions survivors as rights-holders, not stakeholders. Community review boards can override AI interpretations missing cultural context. Data governance controlled by those whose experiences are studied.

Responsibility requires ongoing relationships beyond single studies. Data repatriation. Long-term partnerships. Reciprocity, not extraction.

Ethics means situational, community-defined standards rather than rigid universal protocols—for example, ensuring AI systems respect Indigenous data sovereignty requirements or honor community consent processes before analyzing sensitive information.

The CARE Principles for Indigenous Data Governance

These frameworks are beginning to shape trafficking research practice in concrete ways, and the need is real. When West African or Caribbean immigrant communities use susu (traditional rotating savings and credit associations where members pool money and take turns receiving payouts), financial-monitoring algorithms often flag the activity as suspicious. Culturally informed approaches would recognize legitimate financial practice while still detecting exploitation. Achieving this requires what Two-Eyed Seeing describes: survivor advocacy groups contributing expertise alongside technical development, with community knowledge and technical expertise seeing together. The frameworks exist. The question is whether institutions will implement them structurally or treat them as aspirational add-ons.

Proving It’s Possible, With Caveats

Some institutions aren’t treating these frameworks as aspirational. Between 2020 and 2025, major research funders and governments implemented Indigenous data sovereignty principles as enforceable requirements, demonstrating that equity-centered AI is achievable when sovereignty is structurally embedded.

The U.S. National Science Foundation now requires tribal approval for Arctic research (May 2024) [4]: not consultation, but veto authority. Proposals must include compensation budgets for community participation. The Navajo Nation Human Research Review Board [5], operational since 1996, enforces a 12-phase approval process ending with mandatory data transfer to tribal custody. Australia’s Governance of Indigenous Data Framework [6], mandatory across all federal agencies as of January 2025, requires seven-year implementation plans and Indigenous data governance training for all public servants. Canada’s $2.1 billion Indigenous AI fund [7] supports community-controlled technology labs, reversing decades of extractive research.

All are binding requirements with enforcement mechanisms, not pilot projects.

But a critical tension remains. These policies operate within Western institutional frameworks—federal agencies, research protocols, legal mechanisms. They’re attempting to embed Indigenous epistemologies within structures historically designed to privilege Western knowledge systems. Can institutions built for knowledge extraction truly become vehicles for sovereignty? Or does genuine transformation require building entirely new structures from the ground up, rather than retrofitting existing ones?

These policies are anti-exploitative: they close gaps and bring communities closer to decision-making. But do they solve epistemicide, or do they prevent further harm while leaving fundamental power structures intact? How deep does institutional commitment go when political pressure mounts?

We don’t have complete answers. But we know that the values and frameworks presented here can move us closer to epistemic justice. Sometimes the right question matters more than an immediate solution: it gives others something to pick up and run with. These institutional examples indicate to us that equity-centered AI isn’t a fantasy. The frameworks exist. Implementation is happening. The question is whether this represents genuine transformation or sophisticated accommodation.

What We Must Demand

Clearly, this issue is much bigger than human trafficking research. The algorithmic systems erasing LGBTQ+ youth suicide data, purging disability research, defunding trafficking services, and silencing Indigenous knowledge are all part of one structural problem that requires interconnected resistance. But we’re applying these frameworks to trafficking and exploitation because that’s where we work, and because these fields are fundamentally interconnected.

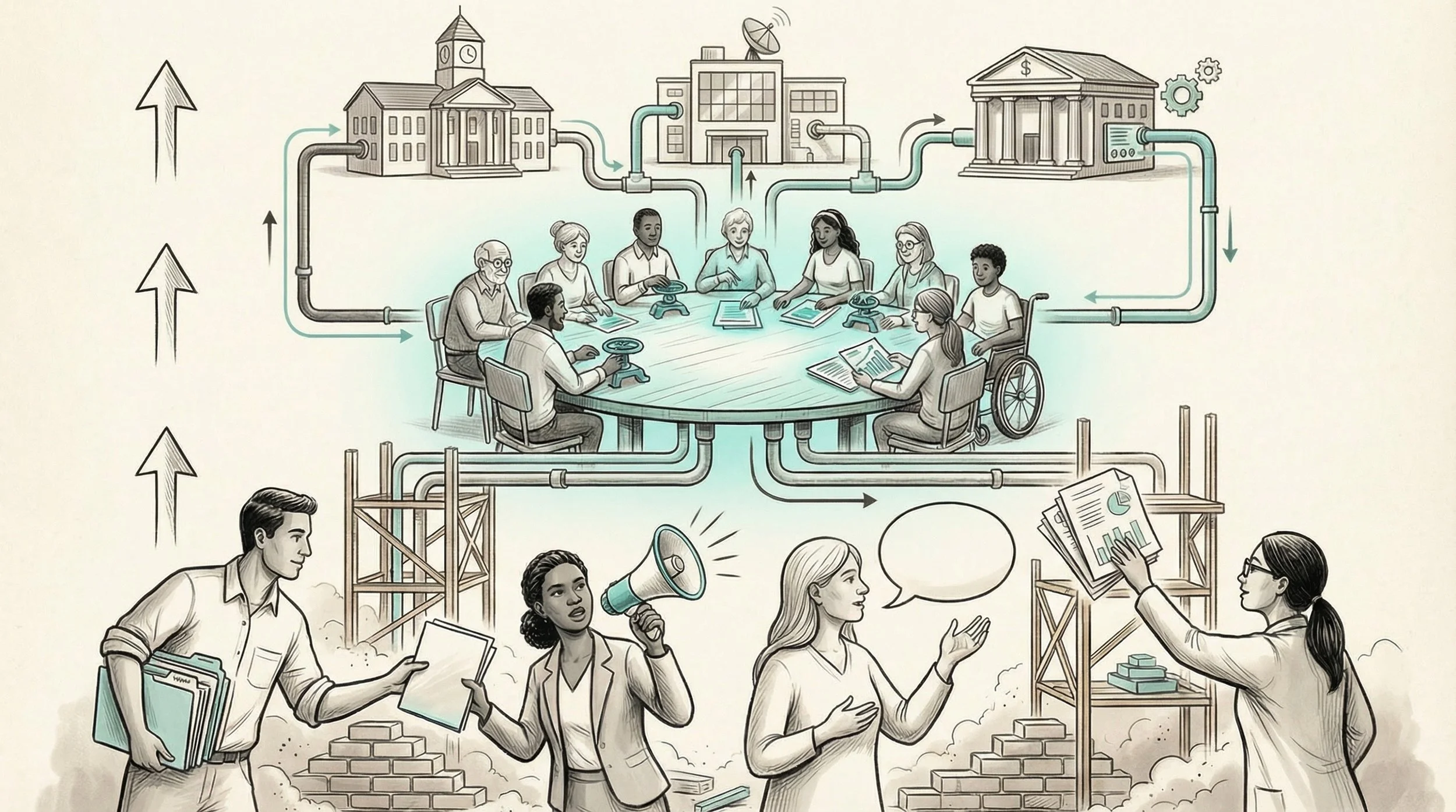

The question goes beyond technical fixes. Audre Lorde reminded us that “the master’s tools will never dismantle the master’s house” [8]. Power reconfiguration requires institutional transformation: changing who controls knowledge production, not just how algorithms function.

Power reconfigured: community knowledge and governance flowing upward to reshape institutional structures.

Those who control resources and infrastructure must act first:

Funders must write rights-holder status into grant requirements: decision-making authority, not “stakeholder consultation.” Compensation mandates for community governance participation. Veto authority over research that doesn’t serve community needs.

Tech companies need community governance structures with binding authority, not advisory boards. Data sovereignty protections built into platforms from the ground up. Transparent algorithmic decision-making that communities can audit.

Institutions—universities, institutional review boards (IRBs), research organizations—should establish data repatriation protocols. Community review boards with override authority on publications and interpretations. Infrastructure investment addressing digital divides, supporting community-controlled digital archives that preserve oral traditions and diverse knowledge systems in formats accessible to both AI systems and cultural practitioners.

Researchers, ourselves included, must recognize that we need to cede control, not just “include” voices. Willingness to be accountable beyond publication cycles. Acknowledgment that our expertise is partial; community knowledge is equally valid, differently structured. Commitment to changing who has power, not just improving how we use ours.

But institutions rarely transform themselves. Those most affected hold knowledge that power-holders lack—and the moral authority to demand change.

Practitioners see daily what algorithms miss. Document when risk assessments fail. Question every tool: who built it, what data trained it, whose experiences are absent? Create space for narratives that don’t fit institutional forms—often the most important data.

Advocates can demand transparency when AI shapes detection, benefits, or housing decisions. Build coalitions—the systems erasing trafficking survivors are the same ones erasing LGBTQ+ youth, disabled people, and Indigenous communities. Center survivor leadership not as consultation but as governance.

Survivors hold expertise no algorithm can replicate. Your knowledge is valid even when systems refuse to recognize it. Peer networks are themselves resistance—ways of knowing that persist outside institutional capture. You have the right to know how your data is used, and by whom.

Two-Eyed Seeing, the CARE Principles, and sovereignty requirements offer pathways for working against algorithmic epistemicide. AI can concentrate power in institutions that have historically marginalized communities, or it can create space for epistemic justice. The difference isn’t the technology. It’s who controls it, and who holds those in control accountable.

This blog post is part of the GAHTS Translational Workgroup’s effort to translate academic research into accessible formats for practitioners, survivors, and policymakers. It draws from a longer academic book chapter on algorithmic epistemicide, written by the same authors [9].

References

[1] Belenky, M. F., Clinchy, B. M., Goldberger, N. R., & Tarule, J. M. (1986). Women’s ways of knowing: The development of self, voice, and mind. Basic Books. https://philpapers.org/rec/BELWWO-2

[2] Bartlett, C., Marshall, M., & Marshall, A. (2012). Two-Eyed Seeing and other lessons learned within a co-learning journey of bringing together indigenous and mainstream knowledges and ways of knowing. Journal of Environmental Studies and Sciences, 2(4), 331–340. https://doi.org/10.1007/s13412-012-0086-8

[3] Global Indigenous Data Alliance. (2019). CARE Principles for Indigenous Data Governance. https://www.gida-global.org/care

[4] U.S. National Science Foundation. (2024). Principles for the conduct of research in the Arctic. https://www.nsf.gov/geo/opp/arctic/conduct.jsp

[5] Navajo Nation Human Research Review Board. (n.d.). https://www.nnhrrb.navajo-nsn.gov/

[6] Australian Bureau of Statistics. (2025). Governance of Indigenous Data Framework. https://www.abs.gov.au/about/aboriginal-and-torres-strait-islander-peoples/governance-indigenous-data-framework

[7] Indigenous Services Canada. (n.d.). Indigenous AI Fund. https://www.sac-isc.gc.ca/

[8] Lorde, A. (1984). The master’s tools will never dismantle the master’s house. In Sister outsider: Essays and speeches (pp. 110–114). Crossing Press. https://theanarchistlibrary.org/library/audre-lorde-the-master-s-tools-will-never-dismantle-the-master-s-house

[9] Davis, J., & Stiver, W. (forthcoming). Scaling equity: Leveraging AI in qualitative research to amplify marginalized voices. Emerald Publishing.

About the Authors

Jarrett Davis, MA is a Senior Research Scholar with the Global Association of Human Trafficking Scholars (GAHTS) and co-convener of up! Collective. His fifteen years of research across Southeast Asia and the United States have focused on how dominant data frameworks render vulnerable populations (boys and young men, transgender individuals, and street-connected children) structurally invisible.

Wendy Stiver, RN, CCM, BSN, MA is a Research Scholar with the Global Association of Human Trafficking Scholars (GAHTS). A registered nurse and published author on trafficking and gender-based violence, her work is grounded in transcultural inclusion and social justice. She is currently exploring the ethics of AI in qualitative research.